Multivariate Testing vs. Split Testing: Which Should You Use?

Are you confused about whether to do split testing or multivariate testing on your web pages, emails and optins? It’s a common issue when you’re getting started with conversion optimization.

Most advice on conversion rate optimization tells you to test, test again and keep on testing. But it’s not always easy to understand what tests you should run and when to run them.

In this guide, we’ll compare split testing vs. multivariate testing. By the end, you’ll know the pros and cons of each type, some key mistakes to avoid and which type of test to use when. Then you’ll be able to get started on your testing plan so you get more conversions from your marketing strategy.

Split Testing vs. Multivariate Testing Definitions

Let’s start by defining split testing and multivariate testing. We’ll explain these in more detail as we go through the guide, but these brief definitions are a starting point.

Split testing is testing a control against a variation for a single element. The control is the original item, and the variation is what you change. In other words, you change one item on a page and see how the results for that page vary from the original version.

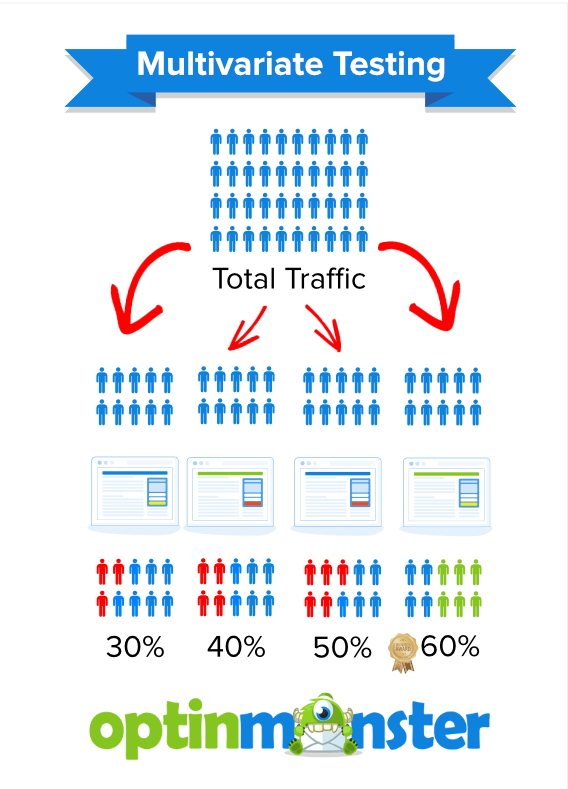

Multivariate testing is testing multiple combinations of items at once. In other words, you change several elements on a page, and one version may look radically different from another.

Understanding Split Testing

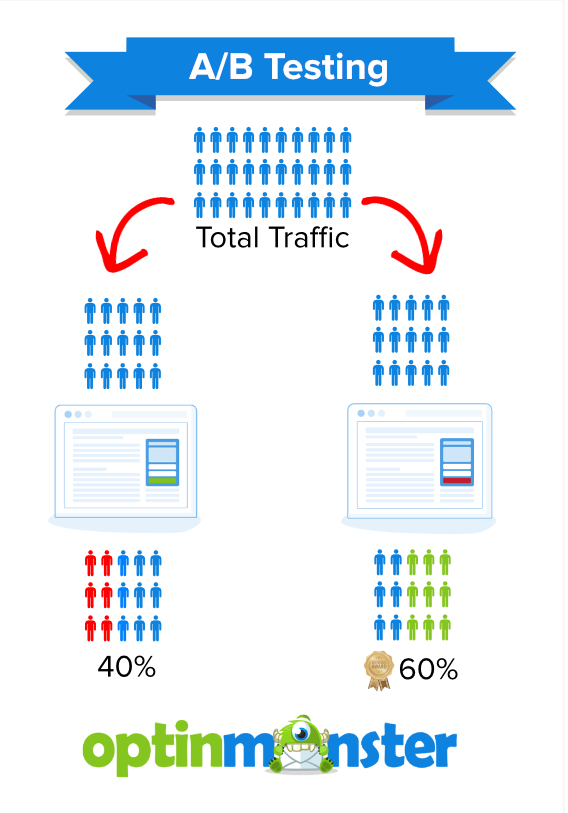

Split testing is also known as A/B testing. As we said earlier, it’s where you take an element on a web page like a call-to-action button, change it and then compare results for each version of the page.

You do that by splitting your traffic evenly, so that 50% of your visitors see the original version of the page, and the other 50% see the new one.

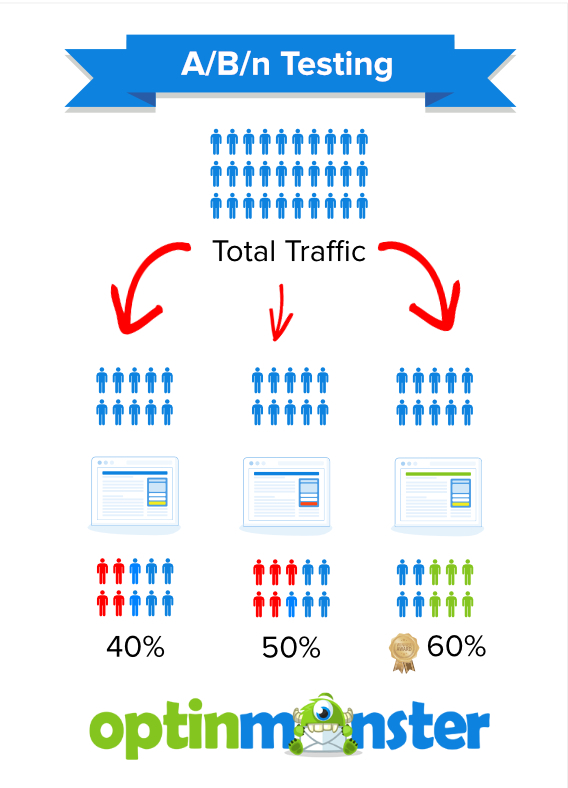

Another version of split testing is A/B/n testing. That’s where you test more variations of a single element, splitting the traffic evenly among them. So if you wanted to try four different versions of a call-to-action button, your visitors would be split in four, with 25% of the total seeing each variation.
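Testing tools handle the traffic split for you, but the idea is simple enough to sketch. The Python below is a minimal sketch that assumes each visitor has a stable ID (for example, from a cookie). It hashes that ID so a returning visitor always sees the same version, and it spreads visitors evenly across however many variations you pass in, whether that's two (A/B) or four (A/B/n). The function name and variation labels are hypothetical.

```python
import hashlib

def assign_variation(visitor_id: str, experiment: str, variations: list[str]) -> str:
    """Deterministically bucket a visitor into one of the variations.

    Hashing the experiment name plus the visitor ID keeps each visitor's
    assignment stable across visits while spreading visitors evenly.
    (Hypothetical helper, not any specific tool's API.)
    """
    key = f"{experiment}:{visitor_id}".encode("utf-8")
    # Use the first 8 bytes of a SHA-256 digest as an unsigned integer.
    bucket = int.from_bytes(hashlib.sha256(key).digest()[:8], "big")
    return variations[bucket % len(variations)]

# A/B test: two versions, roughly 50% of visitors each.
ab = ["control", "variation"]
# A/B/n test: four CTA button versions, roughly 25% of visitors each.
abn = ["control", "green_button", "red_button", "bigger_button"]

counts = {name: 0 for name in abn}
for i in range(100_000):
    counts[assign_variation(f"visitor-{i}", "cta-test", abn)] += 1
print(counts)  # each count should land close to 25,000
```

Hashing rather than picking at random means you don't need to store who saw what: the same visitor ID always maps to the same version.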

Pros and Cons of Split Testing

There are a couple of advantages to using split testing as a conversion optimization tool. First, split testing works even on sites with relatively low traffic, because your visitors are divided among only two (or a few) versions. So, even if you're just starting to build your business, you can use split testing.

Second, split testing provides reliable data because only one variable changes. Since you're testing a single element at a time, you get results relatively quickly and they're easy to interpret.

But there’s also one disadvantage to using A/B testing: you can’t tell how different elements on a page interact. You might decide to change the text on your CTA button, but there might be something else about that page that affects how people respond to it. Split testing won’t let you measure that.

Mistakes to Avoid With Split Testing

If you’re going to run an effective split test, there are a few mistakes to avoid.

One of these is testing the wrong pages. If there’s no real opportunity for improvement or if nobody’s visiting a particular page, then there’s no point in setting up a split test. Similarly, if changing a page element won’t make any difference to the bottom line, why change it?

But if you have the chance to make a change that will get you closer to achieving your business goals, that’s when you run a split test.

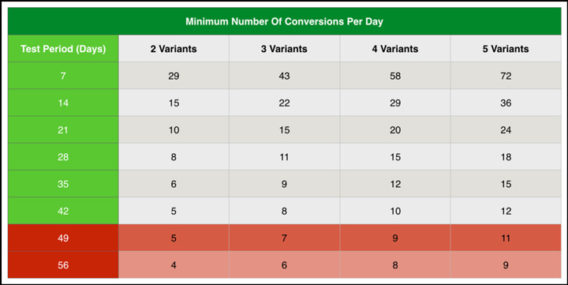

It's also wise to avoid testing too many variations. In theory you can test as many as you like, but each extra variation takes a share of your traffic, so the test takes longer to produce reliable results. Most A/B/n tests work with two to four variations.
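To see why extra variations slow a test down, here's a rough sketch using the common normal-approximation formula for comparing two conversion rates. It assumes a 95% confidence level and 80% statistical power (typical defaults, not figures from this guide), and the 5% baseline and 6% target rates are hypothetical. Each version needs roughly the same number of visitors, so the total traffic you need grows with every version you add, even before any correction for comparing several variations at once, which would push it higher.

```python
import math
from statistics import NormalDist

def visitors_per_variation(baseline: float, expected: float,
                           confidence: float = 0.95, power: float = 0.80) -> int:
    """Approximate visitors needed in EACH version to detect a change from
    `baseline` to `expected` conversion rate with a two-sided test."""
    z_alpha = NormalDist().inv_cdf(1 - (1 - confidence) / 2)
    z_beta = NormalDist().inv_cdf(power)
    variance = baseline * (1 - baseline) + expected * (1 - expected)
    n = (z_alpha + z_beta) ** 2 * variance / (expected - baseline) ** 2
    return math.ceil(n)

# Hypothetical example: 5% baseline conversion rate, hoping to detect 6%.
per_variation = visitors_per_variation(0.05, 0.06)
for versions in (2, 3, 4, 6):  # the control counts as one version
    print(versions, "versions ->", per_variation * versions, "visitors in total")
```

Smaller expected improvements need far more visitors, because the required sample grows with the inverse square of the difference you want to detect.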

Another major mistake is not forming a proper hypothesis before you start testing. What’s a hypothesis? It’s an educated guess about what an issue is and how you can solve it, based on the data you have.

Creating a hypothesis allows you to figure out what you want to test and why, and to think about how you will measure your results.

Digital Marketer has an excellent template for creating a hypothesis:

Because we observed [A] and feedback [B], we believe that changing [C] for visitors [D] will make [E] happen. We’ll know this when we see [F] and obtain [G].

Here’s how this would look in a real situation:

Because we observed a poor conversion rate and visitors reported they couldn't find the upgrade button, we believe that making the button more prominent for all visitors will increase upgrade signups. We'll know this when we see an increase in upgrade signups over a two-week testing period and obtain survey data that shows that people can now see the button.

Timing is another common split testing issue. There are two aspects of this:

- Running your test for too short a time. If you haven’t tested for long enough, the results aren’t reliable and you can’t draw any firm conclusions. Digital Marketer has a chart that guides you to the ideal length for a split test.

- Running a split test at the wrong time. For example, if you usually get increased traffic to your eCommerce store before a major holiday, you can’t compare results with a test run at a different time of year. You need to compare like with like so you can trust the test results.

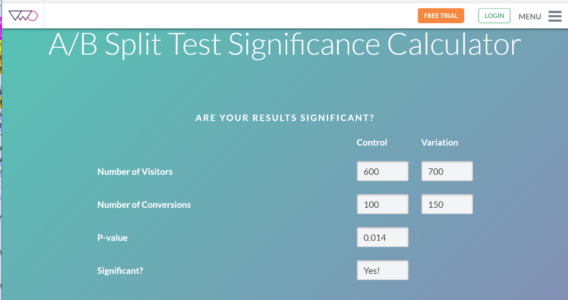

If you want to know whether your split testing results are reliable, check for statistical significance. That means making sure the difference between the versions is large enough that it's unlikely to be down to random chance, and VWO has a tool to help you do it.

It's also important to be reasonably sure a change will bring results before you act on it. That's expressed as a confidence level, and the industry standard is 95%. This tool from Get Data Driven will help you determine the confidence level for your split test.
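Significance calculators like these typically run a statistical test on your conversion counts. Here's a minimal sketch of one standard choice, the two-proportion z-test, which is an assumption on our part since the tools above may use different methods. A p-value below 0.05 corresponds to significance at the 95% confidence level. The conversion numbers are hypothetical.

```python
import math
from statistics import NormalDist

def ab_significance(conv_a: int, visitors_a: int, conv_b: int, visitors_b: int):
    """Two-sided two-proportion z-test. Returns (z, p_value)."""
    p_a = conv_a / visitors_a
    p_b = conv_b / visitors_b
    # Pooled rate, assuming (as the null hypothesis) that A and B perform the same.
    pooled = (conv_a + conv_b) / (visitors_a + visitors_b)
    se = math.sqrt(pooled * (1 - pooled) * (1 / visitors_a + 1 / visitors_b))
    z = (p_b - p_a) / se
    # Probability of a difference at least this large if there were no real effect.
    p_value = 2 * (1 - NormalDist().cdf(abs(z)))
    return z, p_value

# Hypothetical results: 200 of 5,000 visitors converted on the control,
# 260 of 5,000 on the variation.
z, p = ab_significance(200, 5000, 260, 5000)
print(f"z = {z:.2f}, p = {p:.4f}, significant at 95%: {p < 0.05}")
```

Check significance only once the planned sample size is reached; peeking repeatedly and stopping as soon as p dips below 0.05 inflates the chance of a false winner.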

Understanding Multivariate Testing

As we've said, multivariate testing allows you to test changes to multiple elements on a web page at once. According to Kissmetrics, tests with four variants lead to improvements 27% of the time, compared with just 14% with split testing.

There are several types of multivariate testing:

- Full factorial testing, which tests every possible combination of elements until there’s a clear winner. This needs a lot of traffic, and traffic is distributed evenly among the variations.

- Fractional factorial testing (often using the Taguchi Method), which tests only a sample of the possible combinations and uses statistical analysis to estimate the rest and decide on the winner. However, this means you are partially relying on assumptions (such as some element interactions being negligible) rather than on data.

- Adaptive testing, which uses live data on visitor actions to decide on the winning combination.
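The difference between full and fractional factorial designs is easiest to see by listing the combinations. The sketch below assumes three hypothetical page elements with two versions each. The full factorial design uses every combination. The fractional design is a simple half fraction, one common kind of fractional factorial (Taguchi's orthogonal arrays are a related approach), chosen here because it's easy to follow; it is not necessarily what any particular testing tool uses.

```python
from itertools import product

# Hypothetical page elements, each with two versions (first = original).
elements = {
    "headline": ["current", "benefit-led"],
    "cta_button": ["green", "orange"],
    "hero_image": ["product", "person"],
}

def full_factorial(elements: dict[str, list[str]]) -> list[dict[str, str]]:
    """Every combination of every version: the full factorial design."""
    names = list(elements)
    return [dict(zip(names, combo)) for combo in product(*elements.values())]

def half_fraction(elements: dict[str, list[str]]) -> list[dict[str, str]]:
    """A 2^(3-1) half-fraction design for exactly three two-version elements.

    Versions are coded -1/+1 and the third element's level is set to the
    product of the first two (C = A*B). This runs 4 combinations instead of 8,
    at the cost of assuming two-element interactions are negligible, because
    each element's effect is confounded with one (for example, C with A x B).
    """
    names = list(elements)
    if len(names) != 3 or any(len(v) != 2 for v in elements.values()):
        raise ValueError("this sketch needs exactly three two-version elements")
    runs = []
    for a, b in product((-1, 1), repeat=2):
        levels = (a, b, a * b)
        runs.append({name: elements[name][0 if lvl < 0 else 1]
                     for name, lvl in zip(names, levels)})
    return runs

print(len(full_factorial(elements)))  # 8
print(len(half_fraction(elements)))   # 4
```

Each version of each element still appears in exactly half of the fractional design's combinations, which is what lets the analysis estimate every element's effect from fewer combinations.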

Pros and Cons of Multivariate Testing

As a conversion optimization tool, multivariate testing offers several benefits. First, it's faster than running a series of split tests, as you're able to change and evaluate multiple page elements at once.

Second, it helps you to see how different elements on a page interact so you can assess the overall impact. This can be great when redesigning a page, as you can test headings, page copy, buttons, images and forms all at once (though, as you'll see below, there are trade-offs).

And you can also easily find out which elements contributed most to any increase in conversions.
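One simple way to see which element contributed most is to compute each element's main effect: the average conversion rate across all combinations using one version, minus the average across combinations using the other. The sketch below assumes a full factorial test with two versions per element, and the conversion rates are hypothetical placeholders, not real results. Testing tools may use more sophisticated statistical models.

```python
from statistics import mean

# Hypothetical element versions (first listed = original).
elements = {
    "headline": ["current", "benefit-led"],
    "cta_button": ["green", "orange"],
    "hero_image": ["product", "person"],
}

# Hypothetical conversion rates per combination, keyed in the same order
# as `elements`: (headline, cta_button, hero_image).
results = {
    ("current", "green", "product"): 0.040,
    ("current", "green", "person"): 0.042,
    ("current", "orange", "product"): 0.047,
    ("current", "orange", "person"): 0.049,
    ("benefit-led", "green", "product"): 0.043,
    ("benefit-led", "green", "person"): 0.044,
    ("benefit-led", "orange", "product"): 0.051,
    ("benefit-led", "orange", "person"): 0.053,
}

def main_effects(elements, results):
    """Average rate with each element's second version minus the average
    rate with its first version, across all combinations of the others."""
    effects = {}
    for i, (name, (first, second)) in enumerate(elements.items()):
        with_first = [rate for combo, rate in results.items() if combo[i] == first]
        with_second = [rate for combo, rate in results.items() if combo[i] == second]
        effects[name] = mean(with_second) - mean(with_first)
    return effects

effects = main_effects(elements, results)
# Largest effect first; in this made-up data that's cta_button.
for name, effect in sorted(effects.items(), key=lambda kv: abs(kv[1]), reverse=True):
    print(f"{name}: {effect:+.4f}")
```

Main effects are averages, so they can hide interactions (for example, a headline that only helps alongside a particular image); comparing the individual combinations is still worthwhile.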

However, there are also a few disadvantages to multivariate testing. For example, it won't work for low-traffic sites, because of the number of combinations you're testing. You need to have at least 100,000 visitors a month to even consider it.

That also applies to individual pages. If a page isn’t getting enough traffic, there’s no point in running a multivariate test on it.

In addition, the more element combinations you test, the longer the test will take. If you decide to test three elements like your headline, call-to-action button and image, with two versions of each, that already gives you eight combinations (2 × 2 × 2) to test. If you test more elements or more versions of each, testing time and the traffic needed also increase.
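Because the number of combinations is the product of the versions per element, traffic requirements climb quickly. This sketch assumes traffic is split evenly across every combination and that each combination needs the same number of visitors; the 5,000 visitors per combination and 3,000 daily visitors are hypothetical placeholders, so substitute figures from a sample size calculator.

```python
import math

def full_factorial_plan(versions_per_element: list[int],
                        visitors_per_combination: int,
                        daily_visitors: int) -> tuple[int, int, int]:
    """Return (combinations, total visitors needed, days to collect them),
    assuming traffic is split evenly across every combination."""
    combinations = math.prod(versions_per_element)
    total = combinations * visitors_per_combination
    days = math.ceil(total / daily_visitors)
    return combinations, total, days

# Hypothetical figures: 5,000 visitors per combination, 3,000 visitors a day.
print(full_factorial_plan([2, 2, 2], 5_000, 3_000))     # (8, 40000, 14)
print(full_factorial_plan([2, 2, 2, 2], 5_000, 3_000))  # (16, 80000, 27)
print(full_factorial_plan([3, 3, 2], 5_000, 3_000))     # (18, 90000, 30)
```

Adding a fourth two-version element doubles the traffic needed, while adding a third version to two of the elements more than doubles it.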

Mistakes to Avoid With Multivariate Testing

As with split testing, don’t use multivariate testing without a hypothesis. You may be testing multiple elements, but you still need to have some idea about the results you expect.

As mentioned earlier, avoid testing sites that don't have enough traffic to make multivariate testing worthwhile. On those sites, it could take a very long time to collect enough data for reliable results.

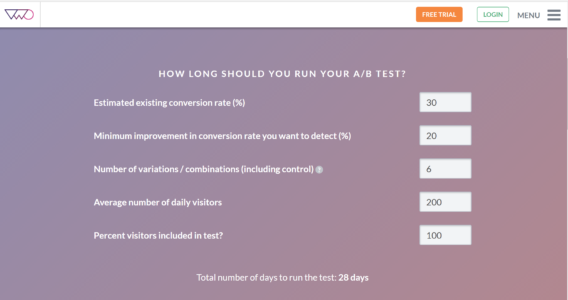

Don’t run your test for too short a time. The more variations you have in your test, the more time it will take and the more traffic you will need. Use this VWO duration calculator to do the math.

That calculator will also help you determine if your sample size is large enough, as not testing with enough traffic is another multivariate testing mistake.

It’s important to choose elements that are likely to have an effect on conversions. As mentioned with split testing, there’s no point in testing something insignificant.

Finally, don’t use multivariate testing to test individual elements. You’d be better off using an A/B test for that.

Which Type of Testing Should I Use When?

So, what's the best type of testing for you to use? The good news is you don't have to choose between multivariate testing and split testing; you can use both. Split tests are quick, and they can produce bigger gains. Multivariate testing gives you an overview of multiple changes; you can then use split testing to fine-tune individual elements.

ConversionXL recommends that you use split testing to find the best layout for a page and multivariate testing for tweaking the interaction of different page elements.

Whichever type of testing you use, you’ll want to follow this testing cycle:

- Identify the problem, based on data.

- Form a hypothesis as to what the cause of the problem is.

- Think of a possible solution.

- Test using split testing, multivariate testing, or both.

- Analyze your results.

- Start the cycle again.

Tools for A/B and Multivariate Testing

Finally, here’s a quick list of A/B and multivariate testing tools you can use:

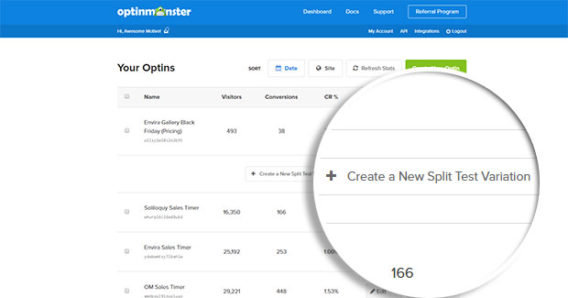

And, of course, you can split test your OptinMonster optins to see which version is most effective.

Now you know the difference between split testing and multivariate testing, so you can get started with these tests as a conversion optimization tool. You can also use split testing as part of email marketing. Don’t forget to follow us on Twitter and Facebook for more free guides.

Disclosure: Our content is reader-supported. This means if you click on some of our links, then we may earn a commission. We only recommend products that we believe will add value to our readers.
